
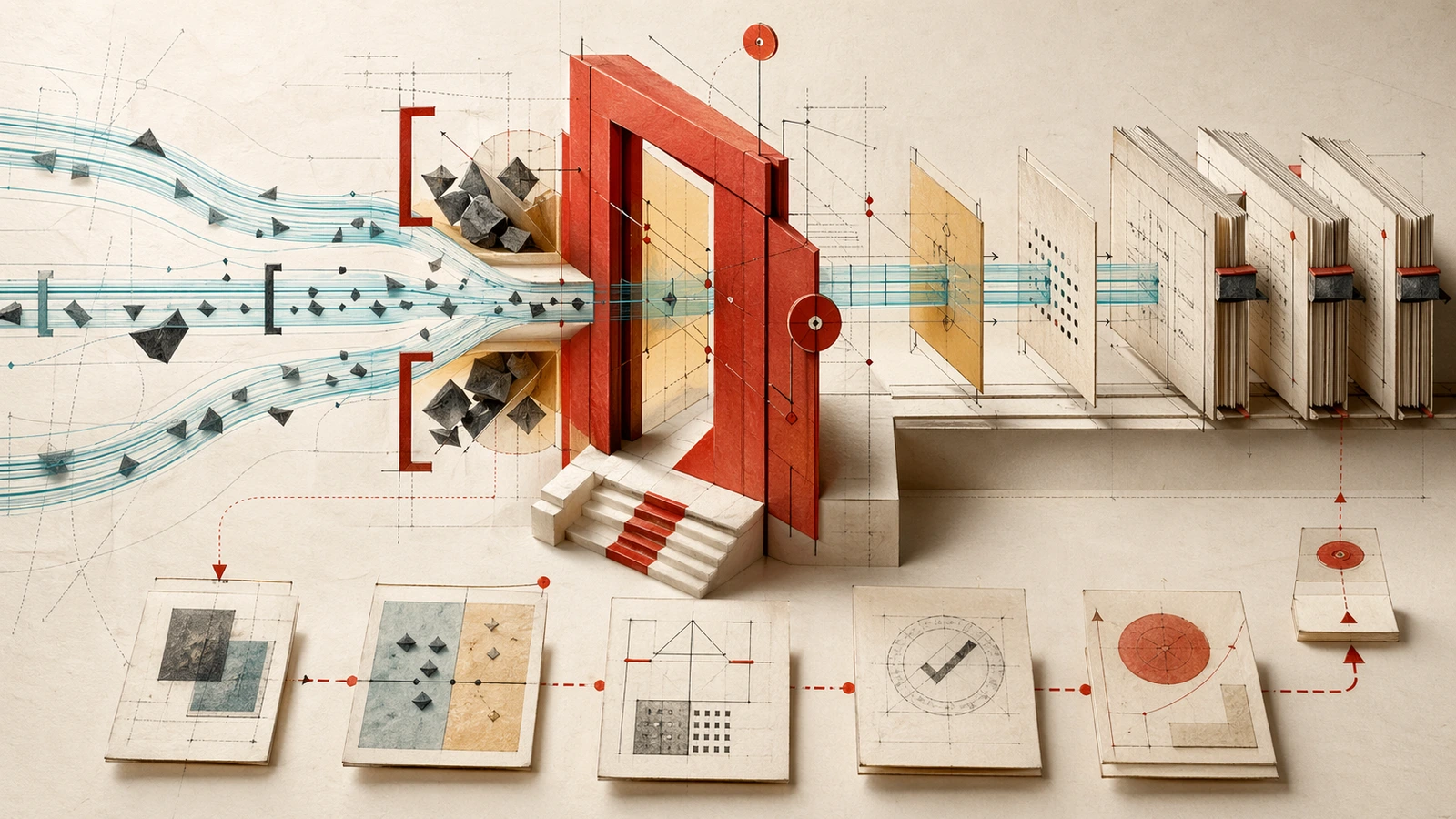

The Multi-Tool Governance Gap: Why 2026 AI Frameworks Fail Engineering Teams

Engineering teams orchestrate three or more concurrent AI tools — Cursor for refactoring, Claude Code for architectural changes, GitHub Copilot for autocomplete — within the same repository. Existing governance frameworks assume single-tool adoption, creating visibility blind spots.

Boards are increasingly asking about AI security and risk exposure rather than just adoption strategy. This shift signals that governance is no longer a compliance checkbox — it has become a C-suite priority requiring real answers. Frameworks like the NIST AI Risk Management Framework and ISO/IEC 42001 provide the baseline language boards now expect leaders to translate into engineering practice.

The problem is that existing governance frameworks assume single-tool adoption, while engineering teams now run three or more AI tools concurrently in the same repository: Cursor for complex refactoring, Claude Code for architectural changes, and GitHub Copilot for autocomplete. That mismatch creates visibility blind spots that leave organizations exposed to compliance failures and security incidents.

The Multi-Tool Reality

Teams don’t choose one AI tool anymore; they orchestrate multiple tools across different phases of the development lifecycle. This hybrid approach creates a fundamental mismatch with current governance models designed for monolithic AI adoption.

The consequence is a set of visibility gaps: teams cannot trace which tool made which change, whether agent-to-agent communication produced emergent behavior, or how policy enforcement carries over from one orchestration paradigm to another.
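As a minimal sketch of closing the first of these gaps, the example below attributes commits to tools by reading a commit-message trailer. The `AI-Tool` trailer name and the tool labels are assumptions made for illustration; none of the tools discussed here is known to emit such a trailer by default, so a team would have to adopt the convention itself (for example through a commit hook).

```python
from collections import Counter

# Hypothetical trailer key: a team convention, not something the tools define.
TRAILER_KEY = "AI-Tool"


def tool_from_message(message: str) -> str:
    """Return the value of the last AI-Tool trailer, or 'unattributed'."""
    tool = "unattributed"
    for line in message.strip().splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() == TRAILER_KEY.lower() and value.strip():
            tool = value.strip()
    return tool


def attribution_report(messages: list[str]) -> dict[str, int]:
    """Count commits per tool so unattributed changes become visible."""
    return dict(Counter(tool_from_message(m) for m in messages))


if __name__ == "__main__":
    sample = [
        "Refactor parser\n\nAI-Tool: cursor",
        "Fix typo in README",
        "Redesign storage module\n\nAI-Tool: claude-code",
    ]
    print(attribution_report(sample))
```

The useful number in such a report is often the `unattributed` count, since it measures how much of the repository history cannot be traced to any tool or person-plus-tool combination.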

Board Accountability in 2026

The evolution of board questions reveals a critical insight: adoption is no longer the challenge; security and risk exposure are. Organizations can point to high adoption, but boards now demand answers about what happens when those tools interact, when models drift over time, or when agent interactions produce complex behaviors that need monitoring.

Governance frameworks must translate technical risk into executive-level metrics without requiring deep technical expertise from board members. This requires new visibility patterns that map tool-specific behaviors to business risk categories.
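One way to picture that mapping is a small rollup from tool-level findings to business risk categories. The sketch below is illustrative only: the event types, category names, and record shape are assumptions, not part of NIST AI RMF, ISO/IEC 42001, or any tool's output.

```python
from collections import defaultdict

# Illustrative mapping from technical finding to board-level category.
# Both the keys and the categories are assumptions for this sketch.
RISK_CATEGORY = {
    "secret_in_diff": "security",
    "unreviewed_merge": "compliance",
    "license_mismatch": "legal",
}


def board_summary(events: list[dict]) -> dict[str, dict]:
    """Aggregate tool-level events into per-category counts and tool lists."""
    summary = defaultdict(lambda: {"count": 0, "tools": set()})
    for event in events:
        category = RISK_CATEGORY.get(event["type"], "uncategorized")
        summary[category]["count"] += 1
        summary[category]["tools"].add(event["tool"])
    # Convert sets to sorted lists so the result is stable and serializable.
    return {
        category: {"count": data["count"], "tools": sorted(data["tools"])}
        for category, data in summary.items()
    }


if __name__ == "__main__":
    events = [
        {"type": "secret_in_diff", "tool": "cursor"},
        {"type": "secret_in_diff", "tool": "copilot"},
        {"type": "unreviewed_merge", "tool": "claude-code"},
    ]
    print(board_summary(events))
```

Keeping an explicit `uncategorized` bucket is a deliberate choice here: findings that do not yet map to a business category stay visible instead of silently dropping out of the executive view.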

Orchestration Models: The Real Differentiator

All three major tools support agents in 2026, but their orchestration models differ fundamentally:

- Claude Code uses terminal-native named teammates and shared task lists

- Cursor uses IDE-native parallel cloud VM subagents

- GitHub Copilot uses GitHub-native workflows, including PR exploration and summaries directly inside pull request views

These differences have compliance implications for audit trails, policy enforcement, and incident response. A governance framework that works for terminal-based agents may fail completely for IDE-sandboxed subagents or GitHub-native workflows.
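To make audit trails comparable across these orchestration models, a team might normalize every agent action into one record shape regardless of where it ran. The following is a sketch under stated assumptions: the field names, the `Surface` values, and the raw dictionary format are hypothetical and do not reflect any log format actually emitted by Claude Code, Cursor, or GitHub Copilot.

```python
from dataclasses import dataclass
from enum import Enum


class Surface(Enum):
    """Where the agent ran (assumed labels for the three paradigms)."""
    TERMINAL = "terminal"
    IDE = "ide"
    GITHUB = "github"


@dataclass(frozen=True)
class AuditEvent:
    tool: str         # e.g. "claude-code", "cursor", "copilot"
    surface: Surface  # orchestration surface the action came from
    actor: str        # teammate, subagent, or workflow identifier
    action: str       # what was done, e.g. "edit" or "summarize"
    target: str       # file path or pull request reference


REQUIRED_FIELDS = ("tool", "surface", "actor", "action", "target")


def normalize(raw: dict) -> AuditEvent:
    """Validate a raw record and convert it into a common AuditEvent."""
    missing = [field for field in REQUIRED_FIELDS if not raw.get(field)]
    if missing:
        raise ValueError(f"audit record missing fields: {missing}")
    return AuditEvent(
        tool=raw["tool"],
        surface=Surface(raw["surface"]),  # raises ValueError if unknown
        actor=raw["actor"],
        action=raw["action"],
        target=raw["target"],
    )
```

Rejecting incomplete records at ingestion, instead of storing them with blanks, means a gap in one tool's logging shows up as an error during collection and not as a hole discovered during incident response.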

This is where a governance layer for AI coding agents becomes useful: not as a fourth coding assistant, but as the specification and auditability surface that can travel across terminal, IDE, and GitHub-native workflows.

That need for a shared control plane is also why governance is becoming the new moat: once teams run multiple agents against the same repository, the differentiator is no longer raw coding capability but whether the organization can prove what happened.

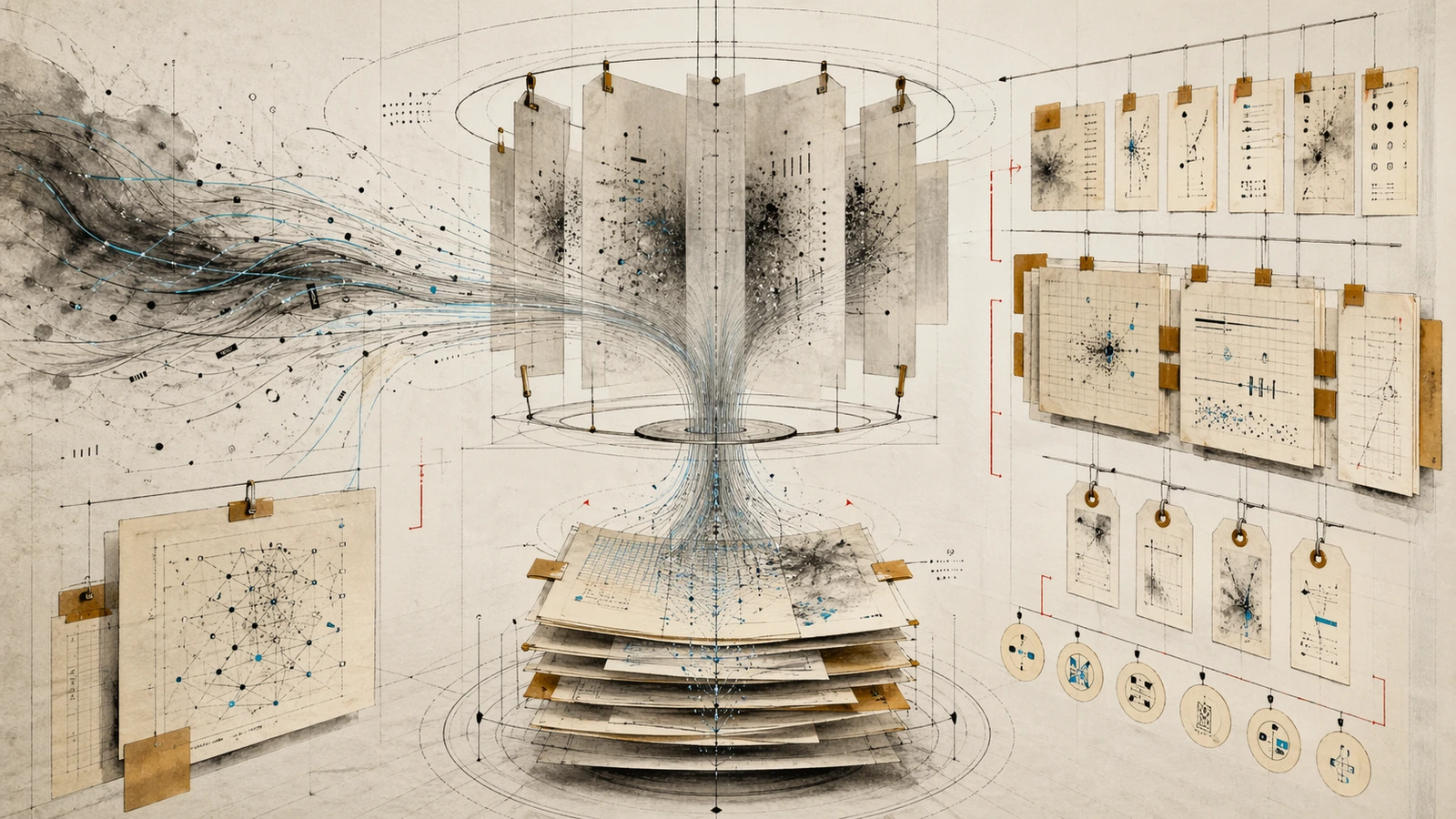

The Drift Problem

Model drift is a known governance risk, and multi-tool environments compound it when outputs from one tool become inputs to another. The OWASP Top 10 for LLM Applications provides a concrete risk taxonomy that engineering leaders can map into monitoring and controls across tools.

When Claude Code’s teammates interact with Cursor’s subagents, for example, the combined behavior may fail in ways that neither tool would show on its own. Continuous governance must account for these cross-tool dynamics.
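A simple starting point for monitoring these dynamics is to detect handoffs, meaning points where a file last changed by one tool is next changed by a different one. The sketch below assumes an ordered change log of `(tool, path)` pairs is already available, for instance from commit attribution; that log format is an assumption of this example.

```python
def cross_tool_handoffs(changes: list[tuple[str, str]]) -> list[tuple[str, str, str]]:
    """Return (path, previous_tool, next_tool) for each cross-tool handoff.

    `changes` must be in chronological order.
    """
    last_tool_for_path: dict[str, str] = {}
    handoffs = []
    for tool, path in changes:
        previous = last_tool_for_path.get(path)
        if previous is not None and previous != tool:
            handoffs.append((path, previous, tool))
        last_tool_for_path[path] = tool
    return handoffs


if __name__ == "__main__":
    log = [
        ("claude-code", "api.py"),
        ("cursor", "api.py"),
        ("cursor", "api.py"),
        ("copilot", "ui.ts"),
    ]
    for path, before, after in cross_tool_handoffs(log):
        print(f"{path}: {before} -> {after}")
```

Handoffs are not failures in themselves. They mark the places where one tool's output became another tool's input, which is where extra review or testing is most likely to catch compounded drift.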

Governance for agentic AI in 2026 therefore needs to treat multi-agent audit requirements and cross-tool visibility patterns as essential controls.

Recommendations

Engineering teams need adaptation strategies for hybrid tool stacks:

- Map tools to lifecycle phases: Define which tools govern which development stages and ensure governance policies follow those boundaries

- Define board-level visibility metrics: Create executive dashboards that translate technical risk into business impact without requiring deep technical expertise

- Address agent-to-agent emergent behaviors: Implement monitoring patterns that capture cross-tool interactions, not just individual model outputs

- Develop orchestration-aware governance patterns: Move beyond capability comparisons to frameworks that account for the differences between terminal, IDE, and GitHub-native workflows
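The first recommendation can be sketched as a small policy table plus a check. The phase names and the tool-to-phase assignments below are assumptions that loosely follow the division of labor described earlier (Claude Code for architectural changes, Cursor for refactoring, GitHub Copilot for autocomplete); a real policy would come from the team's own lifecycle definition.

```python
# Hypothetical policy: which tools are approved for which lifecycle phase.
PHASE_POLICY = {
    "architecture": {"claude-code"},
    "refactor": {"cursor"},
    "implementation": {"copilot", "cursor"},
}


def policy_violations(activity: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return (phase, tool) pairs that fall outside the approved mapping.

    Unknown phases are treated as violations so that new workflow stages
    cannot bypass governance by default.
    """
    violations = []
    for phase, tool in activity:
        allowed = PHASE_POLICY.get(phase)
        if allowed is None or tool not in allowed:
            violations.append((phase, tool))
    return violations


if __name__ == "__main__":
    observed = [
        ("architecture", "claude-code"),
        ("refactor", "copilot"),
        ("release", "cursor"),
    ]
    print(policy_violations(observed))
```

Treating unmapped phases as violations is a conservative default. A team that prefers permissive behavior could invert that branch, at the cost of losing the signal that a phase has no policy at all.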

The winners in 2026 won’t be teams with the most AI tools or the highest agent activity rates. They will be teams that treat orchestration, guardrails, and governance as an integrated system capable of handling multi-tool complexity.