See where AI coding creates risk, and how to control it.

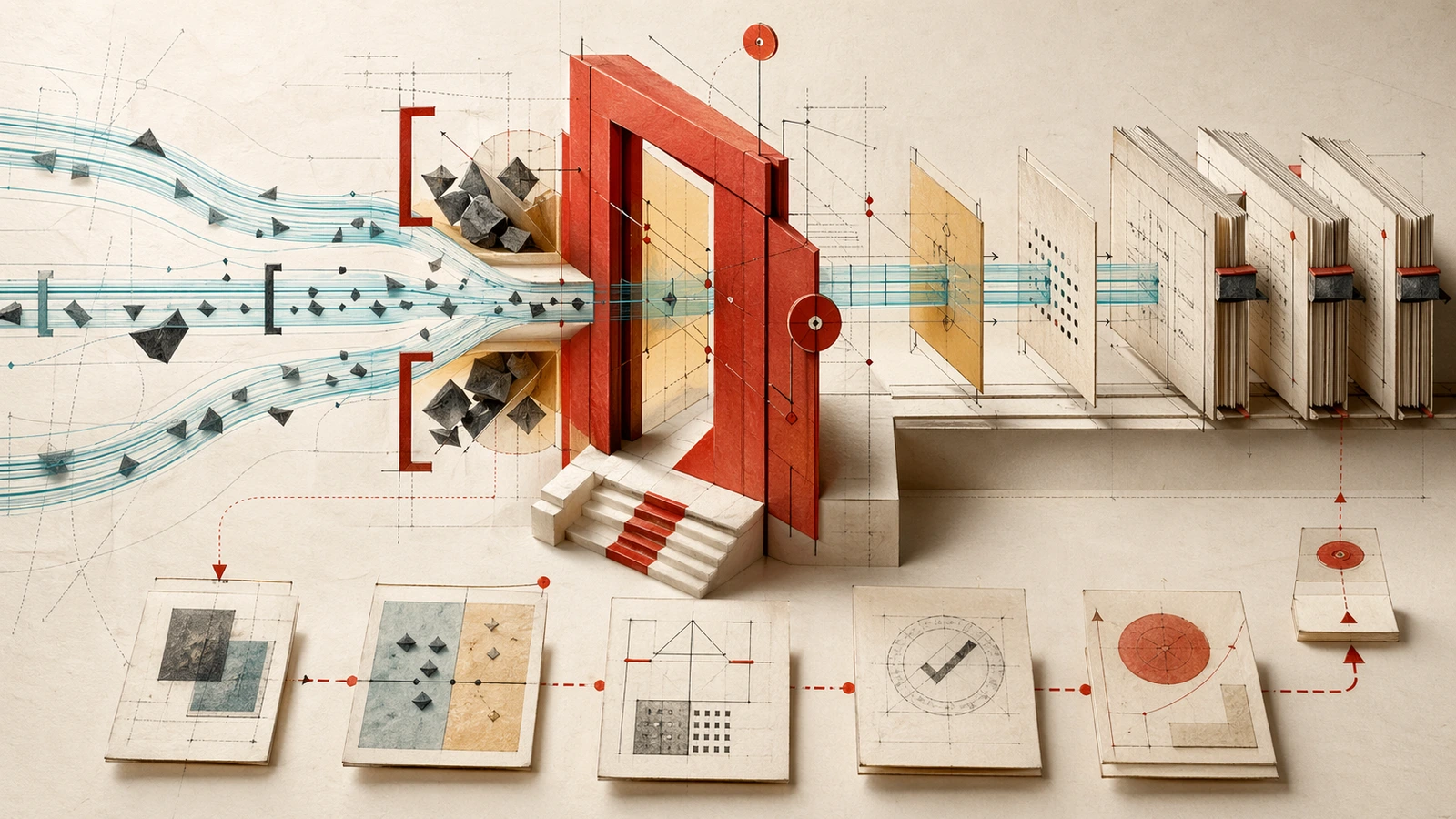

Source-grounded analysis for leaders who need traceable context, specification-driven work, audit trails, compliance evidence, and accountable generated code before it reaches production.

How do you prove what an agent touched, why the code changed, and who accepted the risk before that code reaches production?

Practical lenses on context engineering, spec-driven workflows, audit trails, and the control surfaces needed around AI coding tools.

The risk to watch now

Start with the newest risk map for accountable AI coding.

More analysis

Recent AI coding governance dossiers

Context Engineering: The Missing Governance Layer for Enterprise AI Coding

AI-generated code now makes up 41% of codebases, yet most organizations have no visibility into what AI coding tools read, write, and execute. Governance that inspects output after the fact is inspecting the wreckage; the missing layer is context engineering.

The August 2026 AI Governance Cliff

Eight weeks before the EU AI Act's high-risk enforcement deadline, engineering teams face a structural problem no compliance checklist can solve: AI coding assistants generate code faster than human oversight can track it.

The Governance Gap at 91% AI Adoption: Why 2026 Is the Inflection Point

91% of organizations now use AI coding tools, yet only a fraction operate at the maturity levels where AI delivers compounding returns. The security data (a 45% vulnerability rate, 1 in 5 incidents) and the regulatory deadline (the EU AI Act in August 2026) put concrete decision pressure on engineering leaders.

The Multi-Tool Governance Gap: Why 2026 AI Frameworks Fail Engineering Teams

Engineering teams orchestrate three or more AI tools concurrently within the same repository: Cursor for refactoring, Claude Code for architectural changes, GitHub Copilot for autocomplete. Existing governance frameworks assume single-tool adoption, which leaves visibility blind spots.