Published dossier

Context Engineering: The Missing Governance Layer for Enterprise AI Coding

AI-generated code is now 41% of codebases, yet most organizations have zero visibility into what AI coding tools read, write, and execute. Governance that inspects output after the fact is inspecting the wreckage — the missing layer is context engineering.

Index.dev’s 2026 data shows AI-assisted code now comprises 41% of codebases. GitClear’s 2024 analysis found a 4x increase in code duplication in AI-assisted projects. Sonatype’s research across 36,780 dependency suggestions found that 27.8% pointed to versions that were non-existent, deprecated, or unsafe — nearly one in three wrong in ways compilers won’t catch.

These are not early-adopter metrics. They are enterprise-scale signals. When nearly half your codebase is AI-influenced and code duplication quadruples, the governance challenge is no longer about individual commits. It is about the entire toolchain.

That toolchain-level problem is the connective tissue between the multi-tool governance gap and the context layer: if multiple agents can act in the same repository, the shared context they receive becomes part of the control surface.

The Codebase Has Already Changed

Shadow AI Is Not a Policy Problem. It’s a Visibility Problem.

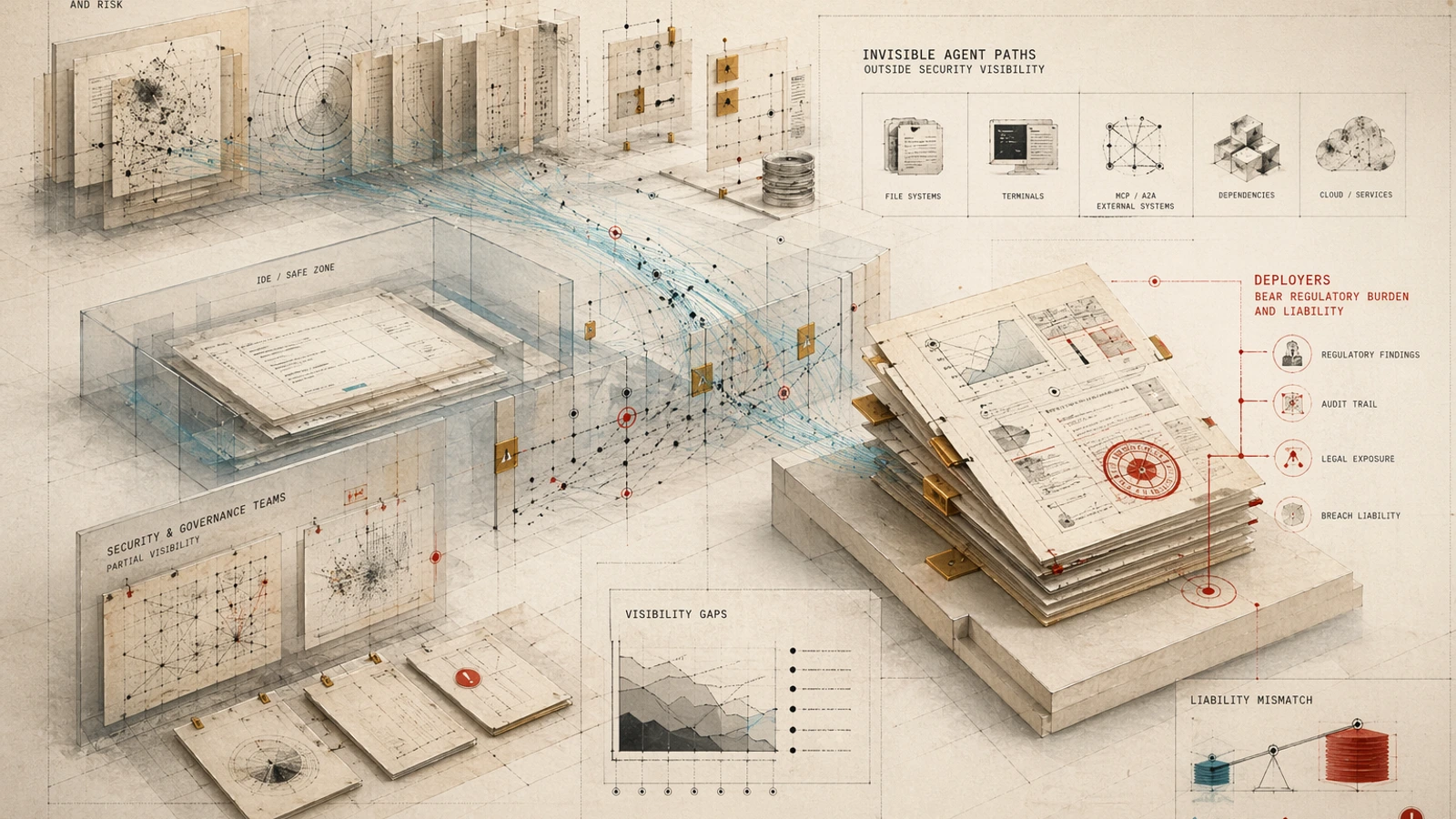

The dominant 2026 developer workflow puts AI coding tools outside the IDE with full codebase access — reading and writing files, executing terminal commands in agentic modes, and reaching external systems via MCP and A2A protocols. Most security teams have zero visibility into what these tools actually do.

The Coalition for Secure AI (CoSAI) documented concrete incidents at RSAC 2026: a coding assistant committing a backdoor, a model file executing a reverse shell, an agent integration exposing 700+ Salesforce environments. These are not edge cases. They are the natural consequence of deploying autonomous agents without the visibility to audit their actions.

Lineaje’s RSAC 2026 survey captures the psychological dimension of this gap: 89% of organizations are confident in their ability to secure AI-generated code, yet only 17% have full visibility. This confidence-control gap has persisted for three consecutive years. AI governance is now ranked as the #1 security challenge for 2027.

The same visibility failure is what makes the August 2026 AI governance cliff more than a legal deadline. If teams cannot see what agents read, wrote, or executed, they cannot make human oversight credible under pressure.

Why Context Engineering Is the Answer

The market is converging on a pattern: governance must happen at the context layer, before code is generated, not after it is reviewed.

Context engineering is the systematic creation, governance, and distribution of context across teams, tools, and repositories. Packmind’s research defines it as the discipline of treating engineering playbooks as versioned artifacts that automatically feed AI coding assistants. Organizations that do this produce measurably higher-quality output because the context is consistent, auditable, and versioned.

That is also why Spec Kitty belongs in this category as a context engineering layer for AI coding agents, not merely as another coding workflow tool. The Spec Kitty docs on spec-driven development frame specifications as the source of truth and focused context for AI agents; its core operating premise is that specifications, constraints, mission context, review evidence, and audit decisions should become governed artifacts before and during agentic coding work.

The logic is straightforward: if AI-generated code is 41% of your codebase and growing, the quality of that code is determined by the quality of the context the AI receives. Governance that only inspects output after the fact is inspecting the wreckage.

This is why Augment Code built its product from the enterprise-first angle — SOC 2 compliance, on-prem deployment, audit logging — rather than following the consumer-tool pattern of shipping features and adding governance as an afterthought. Regulated organizations cannot afford the latter approach.

It is also why governance becomes the moat: the defensible layer is not the agent that writes code fastest, but the system that controls and evidences the context before code exists.

Investor Signals

The venture and angel community is pricing in this governance shift. Several voices are worth noting:

Steve Yegge wrote about “Gas Town,” his multi-agent workspace manager for 20-30 AI coding agents, and argued that “colonies are the future” for safe scaling of AI coding agents. His emphasis on constraint enforcement at execution time maps directly to the context engineering thesis: governance must be structural, not post-hoc. Source: LinkedIn.

Khosla’s Teddie Ward described a portfolio company building its industry-leading coding harness into an enterprise-wide platform, enabling customers to operate a “software factory.” This validates the infrastructure thesis: the next layer of value in AI coding is not more agents — it’s the platform that governs them. Source: TechMeme.

Olivier Pomel of Tessl wrote about “durable context” as a governance layer for AI code development, framing context as the infrastructure layer between intent and execution. This is the same impulse that drives spec-driven development approaches. Source: Tessl blog.

Elvis S. of DAIR.AI highlighted persistent memory and context engineering as the bottleneck for AI agents. As a former Meta AI/Galactica engineer turned angel investor who has invested in the agent memory layer, his signal carries technical weight. Source: LinkedIn.

Gergely Orosz of The Pragmatic Engineer, angel investor in Entire and author with 1.1M+ newsletter subscribers, interviewed Steve Yegge on AI agent orchestration and writes actively about developer workflow automation. His coverage consistently frames governance and orchestration as the next frontier. Source: Pragmatic Engineer.

Nnenna Ndukwe of Qodo spoke at length on AI code review governance and the trust/compliance requirements for enterprise AI coding. Qodo’s $70M Series B validates that the market is pricing heavily on the governance layer. Source: YouTube session.

The Governance Maturity Gap

The numbers on governance maturity are sobering:

- Deloitte 2026: only 1-in-5 companies has a mature model for governance of autonomous AI agents.

Combined with the high adoption rate of AI coding tools, this means the vast majority of organizations are running AI coding tools at production scale with minimal governance infrastructure. The gap between adoption and governance maturity is a structural failure.

Compliance Automation vs. Governance Automation

The market is bifurcating. On one side, compliance automation vendors produce templates and form-filling agents with polished artifacts but no verification of underlying claims. On the other, governance automation platforms connect to GitHub, Azure DevOps, and Jira to inspect actual deployments and produce audit-surviving evidence at the commit level.

Engineering leaders need the latter category. Most still have the former.

What to Do Now

If you are an engineering leader, the audit starts with three questions:

- What can your AI coding tools see? Map every MCP server, A2A connection, and external system access point. If you cannot produce an inventory, you have a shadow AI problem.

- Where is your commit-level audit trail? Metadata-only dashboards do not satisfy SOC 2, HIPAA, or EU AI Act requirements. You need evidence at the commit level.

- Is your context versioned and auditable? If your engineering playbooks are not versioned artifacts that feed your AI tools, you are governance-by-hope.

The EU AI Act’s high-risk enforcement provisions activate August 2, 2026. That is approximately four months from today. The organizations that survive the compliance deadline are not those with the fewest AI tools. They are those with frameworks that enable measurement and enforcement at the commit level, the context layer, and the agent control plane.

The question is no longer whether governance matters. The question is whether you can move from policy paperwork to technical enforcement fast enough.