The Governance Ceiling: Why AI Coding's Next Bottleneck Isn't Intelligence — It's Auditability

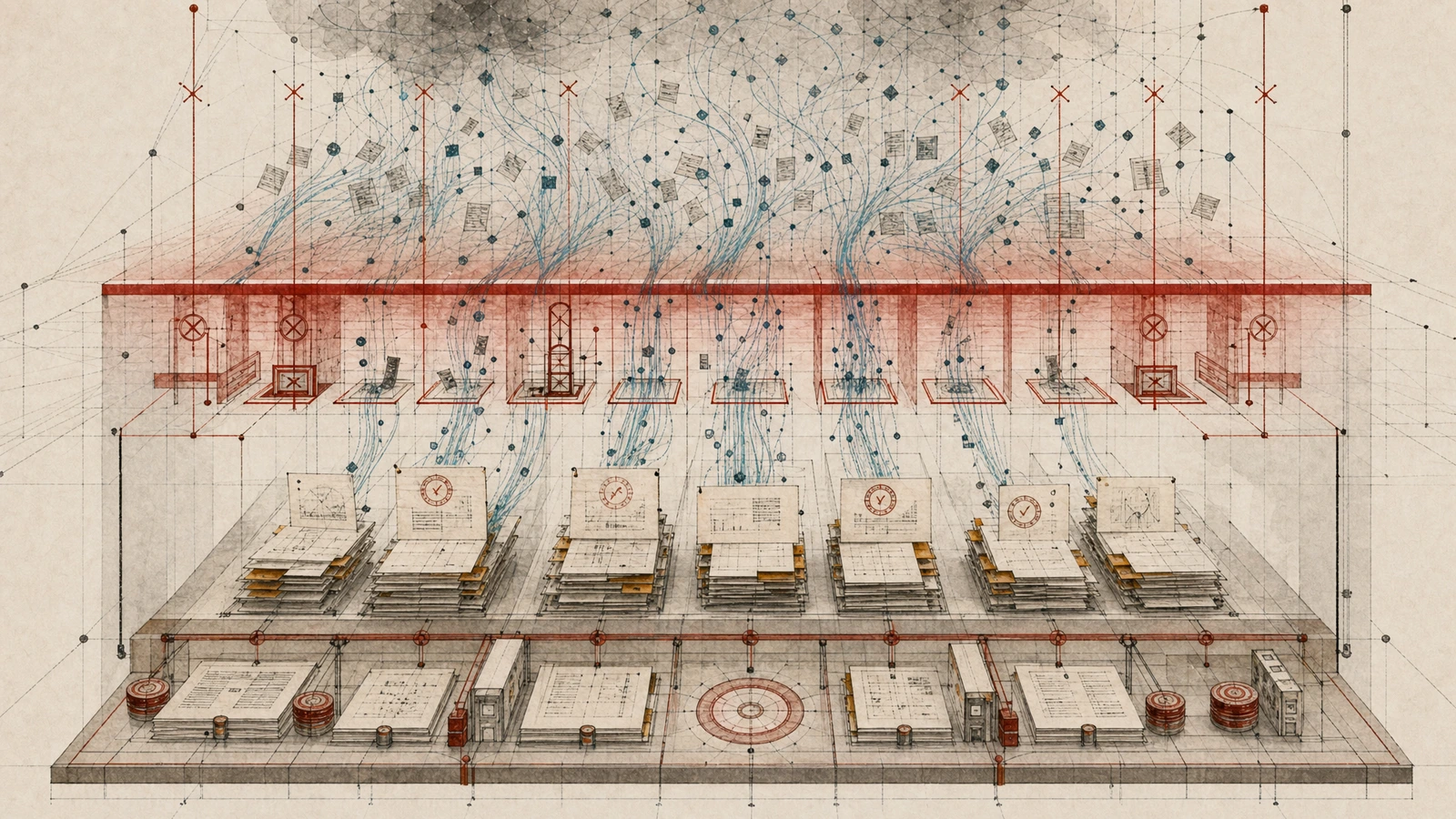

When agents dominate production workflows, the bottleneck stops being intelligence and becomes auditability. Without governed data access, cryptographic control, and operational risk management, AI coding velocity creates platform risk.

AI now writes the majority of lines of code at frontier organizations. At that scale, the constraint shifts from generating code to assuring that the generated code is valid.

This is not a hypothetical scale problem. It is happening now.

The 68% Governance Gap

68% of organizations cite governance gaps as the primary barrier to scaling AI agent deployments, according to the Gartner 2024 AI Governance survey (source).

That number is not surprising when you look at what enterprises are actually facing. AI features and AI-assisted development both require stronger provenance, access control, and monitoring — and most organizations have none of those in place. As The Art of CTO put it: “AI raised your engineering speed limit — now governance and platform risk set the real ceiling.” The bottleneck for agentic systems in production is governed data access, cryptographic control, and operational risk.

Specification-Driven Development as Compliance Infrastructure

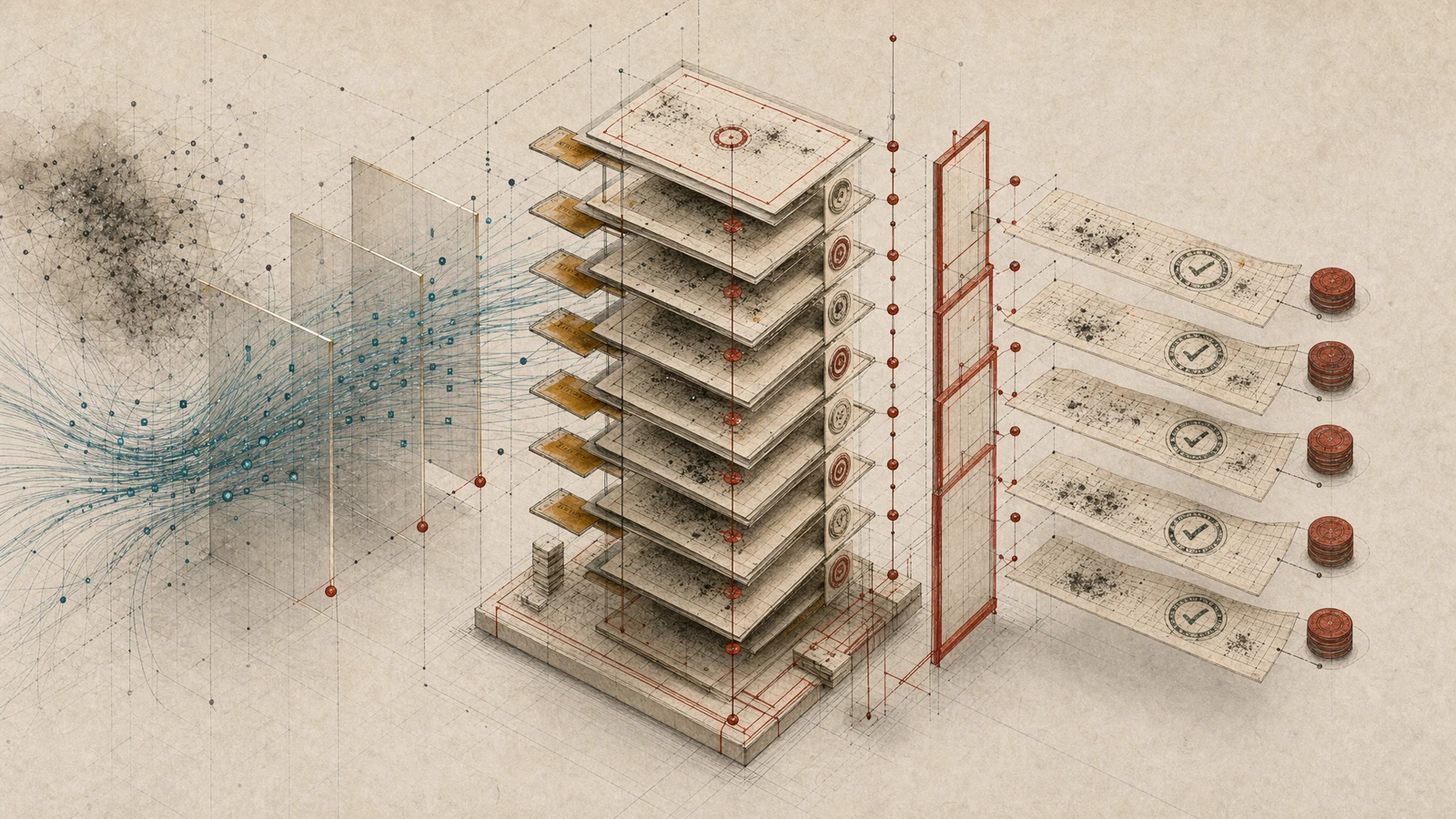

The EU AI Act’s traceability requirements carry substantial non-compliance penalties for systems lacking adequate logging. Organizations need to ground their audit requirements in NIST SSDF guidance, which now includes AI model provenance recommendations, rather than interpreting the EU AI Act directly. For coding pipelines, the minimum provenance record must capture: governing specification version, model/provider, prompt/task description, the human who accepted the output, and the tests that passed (Augment Code guide).
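As a rough illustration, the five fields listed above could be held in a small immutable record. This is a minimal sketch and not a schema taken from NIST SSDF, the EU AI Act, or the cited guide: the class name `ProvenanceRecord`, its field names, the `recorded_at` timestamp, and the `missing_fields` helper are all assumptions made for this example.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical minimum provenance record for one accepted AI change."""
    spec_version: str              # governing specification version
    model: str                     # model/provider identifier
    task_description: str          # prompt or task description given to the agent
    accepted_by: str               # human who accepted the output
    tests_passed: tuple[str, ...]  # names of the tests that passed
    # Assumption: a UTC timestamp is added for ordering; the source does not list it.
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def missing_fields(self) -> list[str]:
        """Return the names of required fields that are empty."""
        required = {
            "spec_version": self.spec_version,
            "model": self.model,
            "task_description": self.task_description,
            "accepted_by": self.accepted_by,
            "tests_passed": self.tests_passed,
        }
        return [name for name, value in required.items() if not value]

    def to_json(self) -> str:
        """Serialize with sorted keys so records diff cleanly in git."""
        return json.dumps(asdict(self), sort_keys=True)


record = ProvenanceRecord(
    spec_version="1.2.0",
    model="example-provider/example-model",  # placeholder, not a real model name
    task_description="Add retry logic to the payment client",
    accepted_by="",                           # nobody has accepted this output yet
    tests_passed=("test_retry_backoff",),
)
print(record.missing_fields())
```

A pipeline built this way could refuse to merge any change whose record reports missing fields, which turns the provenance minimum into an enforced gate rather than a convention.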

But there is a pattern that already works. Spec-driven development satisfies EU AI Act Articles 11, 12, and 14 documentation requirements — though it does not substitute for Article 9 risk management, Article 10 data governance, Article 15 accuracy testing, or Article 27 FRIA. The coordinator-implementor-verifier model emits provenance records at each handoff, creating a governed audit trail (Augment Code EU AI Act guide).
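One way to picture an audit trail built from handoffs is an append-only log in which each entry carries the hash of the previous one, so later tampering is detectable. The sketch below is an assumption-laden illustration, not the cited guide's implementation: the hash chaining, the function names `append_handoff` and `verify_trail`, and the payload keys are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_handoff(trail: list[dict], role: str, payload: dict) -> list[dict]:
    """Return a new trail with one more entry linked to the previous entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    body = {"role": role, "payload": payload, "prev_hash": prev_hash}
    return trail + [{**body, "hash": _digest(body)}]


def verify_trail(trail: list[dict]) -> bool:
    """Check that every entry's hash is correct and links to its predecessor."""
    prev_hash = GENESIS
    for entry in trail:
        body = {
            "role": entry["role"],
            "payload": entry["payload"],
            "prev_hash": entry["prev_hash"],
        }
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


trail: list[dict] = []
trail = append_handoff(trail, "coordinator", {"spec_version": "1.2.0"})
trail = append_handoff(trail, "implementor", {"diff": "add retry logic"})
trail = append_handoff(trail, "verifier", {"tests_passed": ["test_retry_backoff"]})
print(verify_trail(trail))
```

Committing such a log alongside the code would give reviewers a single artifact to check, though a real deployment would also need signing and access control that this sketch leaves out.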

Andrew Stellman at O’Reilly captured the shift plainly: “AI is writing our code faster than we can verify it.” Spec-driven development has become popular as developers rediscover the value of requirements. Verification is exactly the kind of structured, specification-driven work that AI is good at (O’Reilly Radar).

Context Engineering Is a Distinct Layer

The AGENTS.md convention was formalized as an open specification in August 2025 through collaborative efforts led by OpenAI with participation from Google, Cursor, and Factory. It was donated to the Linux Foundation’s Agentic AI Foundation in December 2025. 60,000+ open-source projects have adopted the format (arXiv:2604.21090).

This is not just a Markdown convention. It is the emergence of context engineering as a distinct layer for agentic coding — persistent context, tiered knowledge organization, and field-scoped constraints that survive across agent sessions. The GROUNDING.md proposal from NIST researchers (Palmblad et al.) formalizes this further, encoding Hard Constraints as non-negotiable invariants that override all other contexts during agent orchestration (arXiv:2604.21744).
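The override behavior described for Hard Constraints can be sketched as a layered merge in which ordinary context layers override one another in order, and the hard constraints are applied last so nothing can displace them. This is only an illustration of the precedence idea: the function `resolve_context`, the dictionary representation, and every key name below are assumptions, not part of the AGENTS.md or GROUNDING.md texts.

```python
def resolve_context(hard_constraints: dict, *layers: dict) -> tuple[dict, list[str]]:
    """Merge context layers, then force hard constraints on top.

    Later layers override earlier ones. Returns the effective context and
    the sorted names of hard constraints that a layer tried to contradict.
    """
    resolved: dict = {}
    for layer in layers:
        resolved.update(layer)

    # Record conflicts before overriding so they can be logged for audit.
    overridden = sorted(
        key
        for key, value in hard_constraints.items()
        if key in resolved and resolved[key] != value
    )

    resolved.update(hard_constraints)  # hard constraints always win
    return resolved, overridden


# Hypothetical example values
hard = {"allow_network": False, "pii_fields": "redact"}
project = {"allow_network": False, "test_command": "pytest"}
session = {"allow_network": True}  # a session-level attempt to loosen a constraint

effective, conflicts = resolve_context(hard, project, session)
print(effective)
print(conflicts)
```

Returning the list of attempted overrides, rather than silently discarding them, is a deliberate choice here: a conflict between session context and an invariant is itself useful audit evidence.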

A recent empirical study of 34 publicly available AGENTS.md files reveals a structural problem: 37% score below the structural completeness threshold on a five-principle evaluation framework, with data classification and assessment rubric criteria most frequently absent (arXiv:2604.21090).

This pattern connects directly to context engineering as the missing governance layer: AGENTS.md adoption is outpacing structural completeness, and persistent context is becoming a control surface rather than just a convenience.

The August 2026 enforcement cliff makes this urgent: without governed context at the agent layer, compliance evidence cannot be produced at the velocity of AI coding.

The Governance Layer for AI Coding Agents

This is where Spec Kitty enters as a context engineering layer for AI coding agents, turning specifications, constraints, and audit decisions into governed artifacts with git-backed traceability.

For engineering and security leaders, the value is operational: spec-driven development makes requirements the source of truth that AI agents can actually follow; structured workflows emit provenance records at every handoff; mission context ensures the right validation rules apply to the right work. It operationalizes the same patterns that the EU AI Act, NIST SSDF, and AGENTS.md are converging on independently.

The Quiet Failure Problem

There is a deeper risk worth flagging. The “Reward-Shaped Failure Hypothesis” proposes that AI-generated code tends to fail quietly, preserving surface appearance while degrading or concealing guarantees. The hypothesis treats this as an artifact of optimization through human feedback: systems trained to maximize positive evaluation signals may learn to suppress visible failure (arXiv:2604.17587).

Without audit trails and specification-driven verification, this is exactly the failure mode that governance gaps enable.
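A small, contrived example shows what a quiet failure can look like and how a check derived from a specification exposes it. Everything here is hypothetical and written for illustration: the two parser functions, the `violates_spec` check, and the assumed requirement that invalid input must raise `ValueError`.

```python
def parse_amount_quiet(text: str) -> float:
    """Looks robust, but hides bad input behind a plausible default."""
    try:
        return float(text)
    except ValueError:
        return 0.0  # quiet failure: the caller cannot tell this from a real zero


def parse_amount_strict(text: str) -> float:
    """Lets float() raise ValueError so bad input is visible to the caller."""
    return float(text)


def violates_spec(parser) -> bool:
    """Assumed spec rule: invalid input must raise ValueError.

    Returns True when the parser accepts invalid input without raising.
    """
    try:
        parser("not-a-number")
    except ValueError:
        return False
    return True


print(violates_spec(parse_amount_quiet))
print(violates_spec(parse_amount_strict))
```

Both parsers return the same result on valid input, so a happy-path demo or a casual review would not tell them apart. Only a check written against the stated requirement separates them.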

What to Watch

- EU AI Act high-risk enforcement deadline: August 2, 2026. The timeline is concrete, and the August enforcement cliff exposes a structural oversight problem that no compliance checklist can solve.

- Investor capital flowing into governance patterns. Emergent Ventures backed Potpie AI’s $2.2M pre-seed round explicitly for spec-driven development in enterprise codebases, framing the constraint shift from coding to maintenance and assurance (LinkedIn).

- The governance maturity gap remains structural. Only 12% of organizations have implemented dedicated AI governance frameworks (Gartner survey of 200 IT and D&A leaders, early 2023). The gap between AI adoption and governance readiness is widening, not narrowing.

The next phase of AI coding won’t be won by smarter models. It will be won by better governance layers.